In 2022, something changed. A product launched quietly, and within five days it had a million users. Within two months, it had a hundred million. At the time, nothing in the history of consumer technology had grown that fast.

People were not just curious. They were eager and unsettled. Because this thing, this AI, could write, reason, explain, and argue. It could draft a legal brief, debug a codebase, or break down a complex concept in plain language. And it did all of that by doing one thing exceptionally well: understanding and generating language.

That moment kicked off what many are now calling the most consequential shift in technology since the internet. At the centre of it all is a class of AI systems known as Large Language Models (LLMs).

You have probably used one. You may not know what it is. And that gap, between usage and understanding, is exactly where things get interesting.

LLMs are the engines powering a new class of AI tools. Claude. ChatGPT. Gemini. Grok. Behind every one of them is an LLM, a system trained to understand and generate human language at a scale that, frankly, still feels a little surreal.

An LLM is not magic. It is math. And once you understand what it does, the technology becomes a lot less alien and a lot more useful.

So, What Exactly Is a Large Language Model?

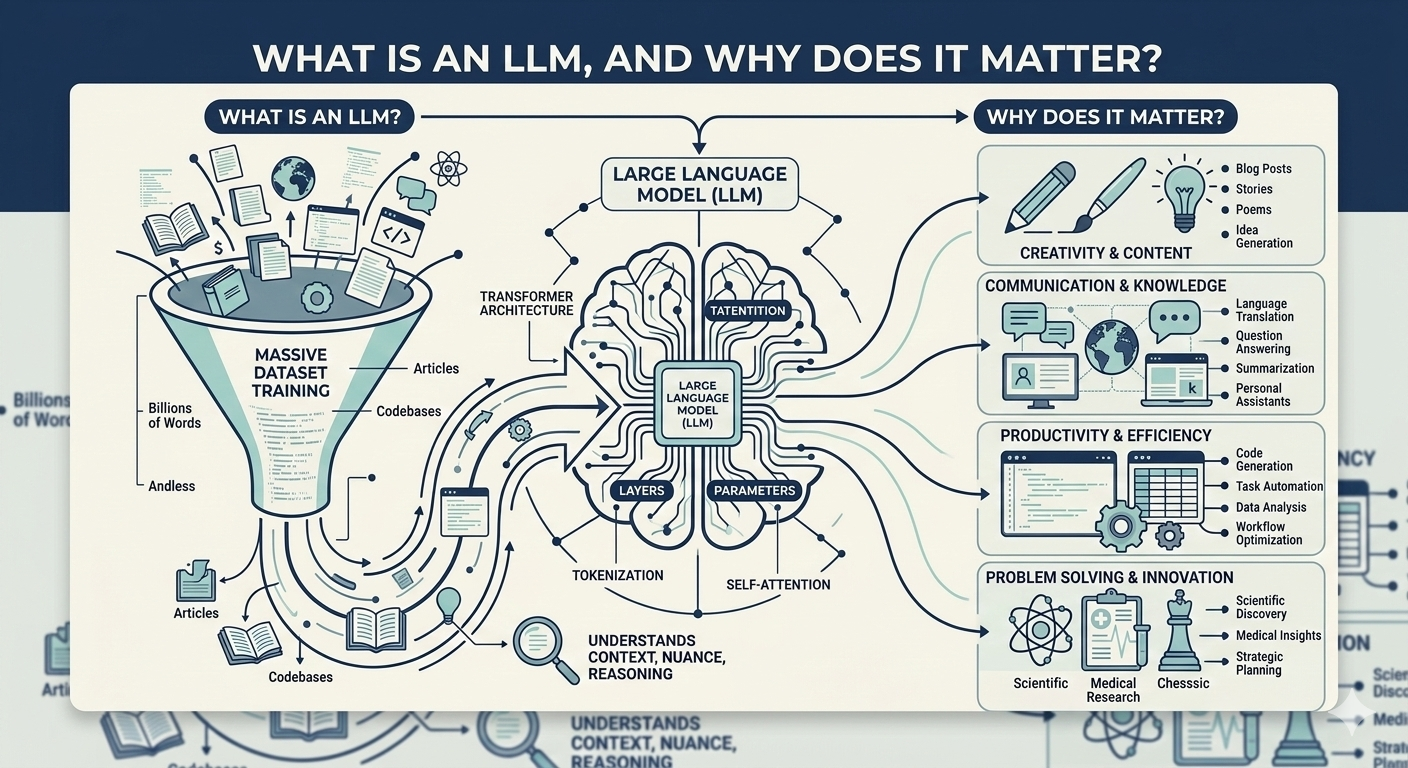

Think of an LLM as a prediction machine that has been trained on an enormous amount of text: books, articles, code, research papers, forum threads, and more. Through that training, it learns one fundamental skill: given a sequence of words, what word is most likely to come next?

That may sound simple, but it is not. Doing this well at scale, across topics, in multiple languages, with nuance and context, requires a model with billions of parameters running on infrastructure most of us will never see.
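To make the idea of next-word prediction concrete, here is a minimal sketch that counts which word follows which in a tiny made-up corpus and then predicts the most frequent follower. It is a toy bigram counter, not how a real LLM works internally; the corpus and the function names `train_bigram_counts` and `predict_next` are invented for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_counts(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        following[current_word][next_word] += 1
    return following


def predict_next(following, word):
    """Return the most frequently observed next word, or None if unseen."""
    candidates = following.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Hypothetical training text; a real model sees vastly more than this.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigram_counts(corpus)

print(predict_next(model, "the"))    # cat  ("cat" follows "the" most often)
print(predict_next(model, "sat"))    # on
print(predict_next(model, "zebra"))  # None (never seen in training)
```

A real LLM replaces the lookup table with a neural network and looks at far more than one previous word, but the question it answers is the same.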

The “large” in Large Language Model refers to two things: the size of the training data and the number of parameters the model uses to encode what it has learned. We are talking about models with hundreds of billions of parameters. That scale is what separates today’s LLMs from earlier, narrower language tools.
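A quick back-of-the-envelope calculation shows why that scale demands serious hardware. The sketch below assumes each parameter is stored as a 16-bit number (2 bytes), and the 200 billion figure is purely illustrative, not the size of any particular model.

```python
def weight_memory_gb(num_parameters, bytes_per_parameter=2):
    """Memory needed just to hold the weights, in gigabytes (1 GB = 1e9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


# Assumption: 200 billion parameters at 2 bytes each (16-bit precision).
print(weight_memory_gb(200_000_000_000))  # 400.0
```

That is around 400 GB for the weights alone, before any of the working memory needed to actually run the model.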

Claude: A Case Study in What This Looks Like in Practice

Claude, built by Anthropic, is one of the clearest examples of how LLM technology translates into a real product. What makes Claude worth studying is not just what it can do but the thinking behind how it was built.

Anthropic was founded by former members of OpenAI with a specific focus: AI safety. That focus shaped Claude in a meaningful way. Rather than training the model purely to be helpful or capable, Anthropic built Claude with a framework around honesty, avoiding harm, and behaving in broadly beneficial ways. They call this approach Constitutional AI: essentially, a set of principles built into the training process itself.

The result is a model designed to push back when it disagrees with you, to flag uncertainty rather than confidently hallucinate, and to feel less like a tool that says yes to everything and more like a thoughtful collaborator with actual opinions.

For people who work in content, research, writing, or product, that distinction matters.

How an LLM Actually Processes Your Prompt

When you type a message into Claude or any other LLM-powered tool, here is roughly what happens.

Your input gets broken into tokens: small chunks of text, usually a word or part of a word. Those tokens are converted into numbers, which the model processes through a neural network architecture called a transformer. The transformer is the key innovation that made modern LLMs possible. It allows the model to capture context: not just the word you wrote, but its relationship to everything else in your prompt.
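The two steps above can be sketched roughly as follows. The first half splits text into pieces from a tiny hand-written vocabulary and maps them to numbers; the second half computes attention weights, the mechanism a transformer uses to decide how much each token should "look at" the others. Everything here is a simplified assumption: the vocabulary `VOCAB`, the greedy longest-match rule, and the two-dimensional vectors are invented for illustration, and real tokenizers and models learn these from data.

```python
import math

# Hypothetical toy vocabulary; real tokenizers learn tens of thousands of pieces.
VOCAB = {"un": 0, "believ": 1, "able": 2, "token": 3, "s": 4, " ": 5, "<unk>": 6}


def tokenize(text):
    """Greedy longest-match split of text into known vocabulary pieces."""
    tokens = []
    i = 0
    while i < len(text):
        # Try the longest possible piece starting at position i first.
        for end in range(len(text), i, -1):
            piece = text[i:end]
            if piece in VOCAB:
                tokens.append(piece)
                i = end
                break
        else:
            # No known piece starts here; emit an "unknown" token and move on.
            tokens.append("<unk>")
            i += 1
    return tokens


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(query, keys):
    """Scaled dot-product attention weights of one query over all keys."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    return softmax(scores)


tokens = tokenize("unbelievable tokens")
token_ids = [VOCAB[t] for t in tokens]
print(tokens)     # ['un', 'believ', 'able', ' ', 'token', 's']
print(token_ids)  # [0, 1, 2, 5, 3, 4]

# Made-up 2-dimensional vectors standing in for learned token representations.
query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
weights = attention_weights(query, keys)
print(weights)  # the first key, most similar to the query, gets the largest weight
```

In a real transformer this weighting happens for every token against every other token, across many layers, which is how the relationships within your prompt get captured.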

The model then generates a response token by token, each one predicted based on everything that came before it. That is why phrasing your prompts clearly matters so much. You are essentially setting the conditions for that prediction chain.
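Here is a minimal sketch of that token-by-token loop. The lookup table `TOY_MODEL` is a made-up stand-in for a real model: it only looks at the last two tokens and always picks the single most likely continuation, whereas a real LLM conditions on the whole prompt and usually samples from the probabilities. The point is just to show how a different prompt sends the chain down a different path.

```python
# Hypothetical next-token probabilities, keyed by the previous two tokens.
TOY_MODEL = {
    ("write", "a"): {"poem": 0.6, "report": 0.4},
    ("a", "poem"): {"about": 0.9, "<end>": 0.1},
    ("poem", "about"): {"rain": 0.7, "cats": 0.3},
    ("about", "rain"): {"<end>": 1.0},
    ("summarise", "a"): {"report": 0.8, "poem": 0.2},
    ("a", "report"): {"<end>": 1.0},
}


def generate(prompt_tokens, max_new_tokens=10):
    """Extend the prompt one token at a time until <end> or the length limit."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        context = tuple(tokens[-2:])
        distribution = TOY_MODEL.get(context)
        if distribution is None:
            break  # the toy table has nothing to say about this context
        # Greedy choice: take the most probable next token.
        next_token = max(distribution, key=distribution.get)
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens


print(" ".join(generate(["write", "a"])))      # write a poem about rain
print(" ".join(generate(["summarise", "a"])))  # summarise a report
```

Changing a single word in the prompt changes every prediction that follows it, which is the practical reason clear phrasing pays off.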

What LLMs Are Good At and Where They Fall Short

LLMs are impressive at tasks that involve language: drafting, summarising, explaining, translating, rewriting, coding, and reasoning through problems step by step. They are especially powerful when you give them clear context and a specific goal.

But they have real limitations. They do not truly “know” things the way humans do; they have learned patterns from data, not facts from lived experience. They can hallucinate. They can be confidently wrong. And without the right guardrails, they can reflect the biases embedded in the text they were trained on.

Why This Matters Beyond Tech

LLMs are not just a developer story. They are already embedded in the tools that writers, marketers, founders, researchers, and support teams use every day. The question is not whether this technology will affect your work; it already does. The question is whether you understand it well enough to use it intentionally.

Understanding what an LLM is, how it works, what it optimises for, and where its limits are is the difference between someone who uses AI reactively and someone who uses it strategically.

The machine thinks in language. And if you work in language, that makes this your space too.

The Bigger Picture: We Are Still Early

The LLMs we are using today are not the finished product. They are version one. The models being developed now are faster, more context-aware, and increasingly capable of reasoning across longer and more complex tasks. Every few months, something ships that would have seemed impossible the year before.

What does that mean for the average person: the writer, the founder, the analyst, the marketer? It means the window to learn how to use these tools well is right now. Not because AI will replace what you do, but because the people who understand it will have an edge over those who do not.

Claude is a useful lens here because Anthropic is one of the few labs working openly on the long-term questions: What does a trustworthy AI actually look like? How do you build something powerful without it becoming dangerous? These are not abstract philosophical debates. They are engineering decisions being made right now, and they will shape how this technology evolves over the next decade.

The internet changed how we accessed information. LLMs are changing how we work with it: how we write, synthesise, build, and communicate. That change is already underway. The only real choice left is whether you are going to understand it or just be carried along by it.